Explainable AI

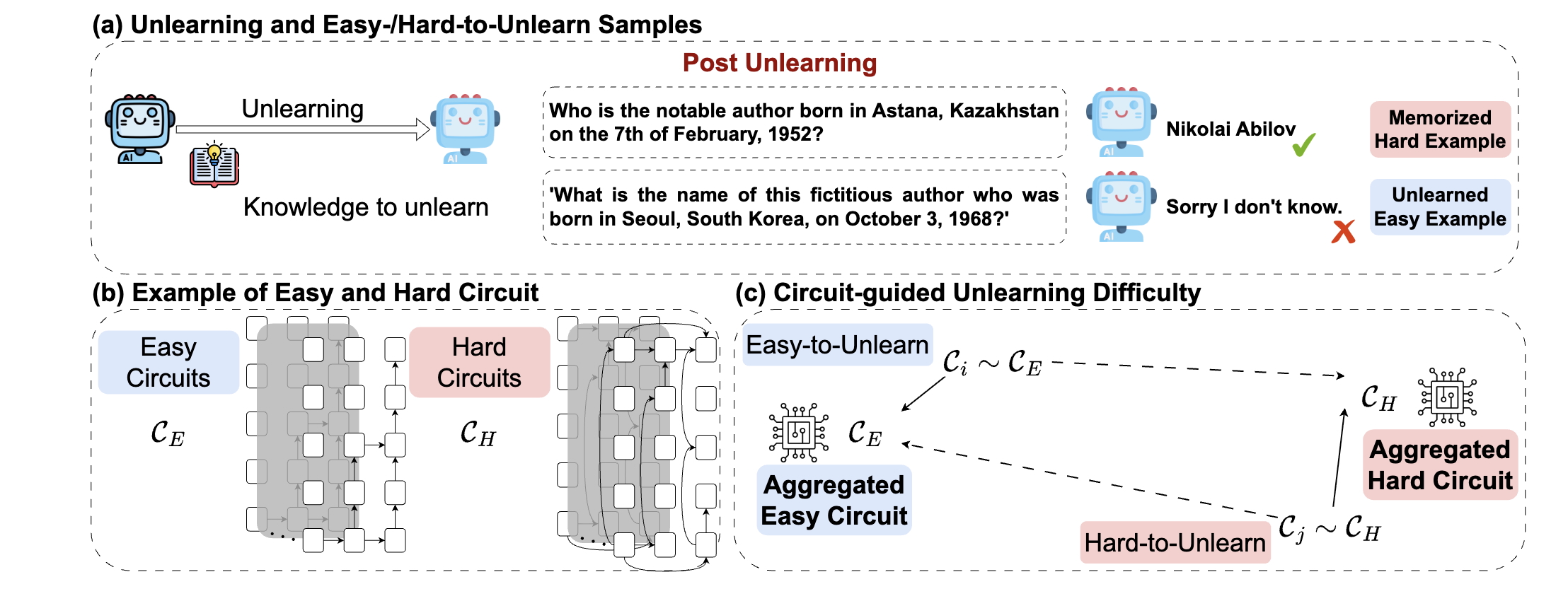

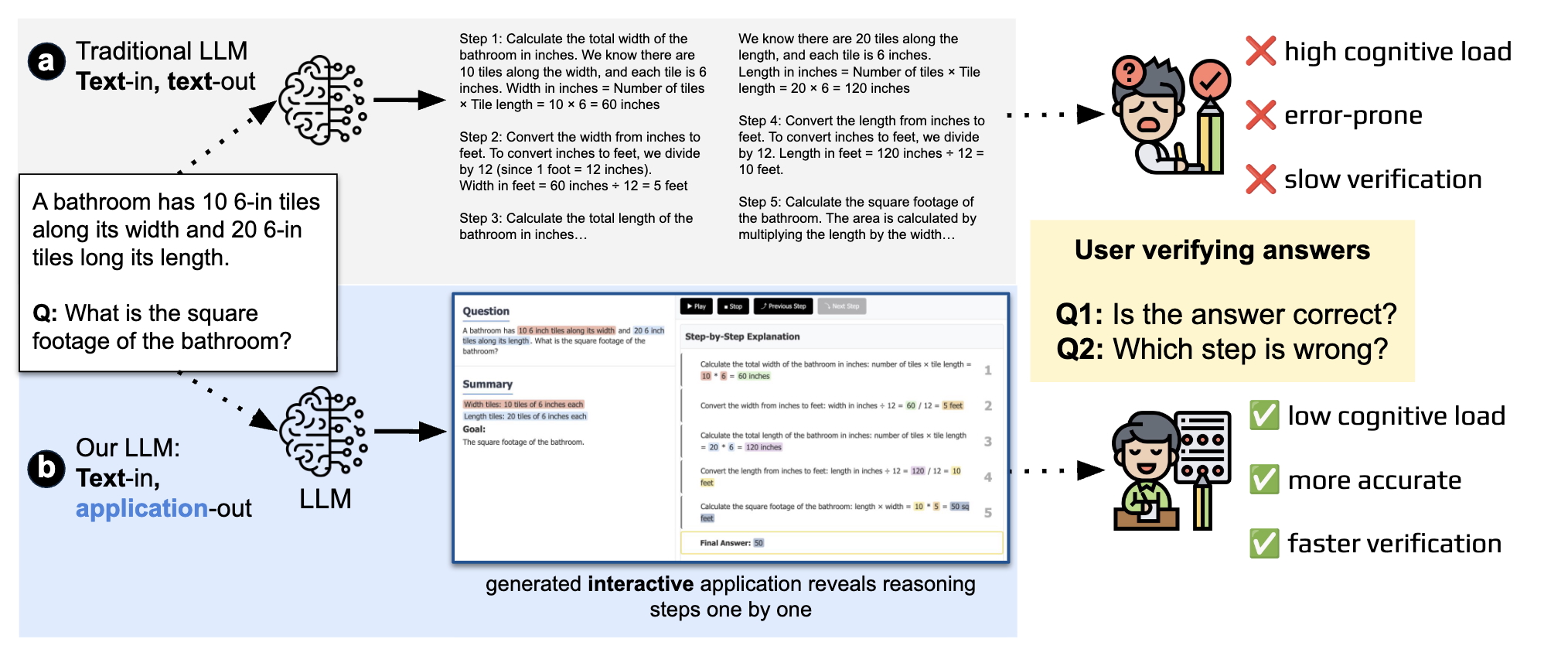

Making AI decisions transparent, interpretable, and actionable for real stakeholders — from concept-level explanations to interpretability agents. Our work spans concept explanations, mechanistic interpretability, model inspection, and interactive explanation interfaces.

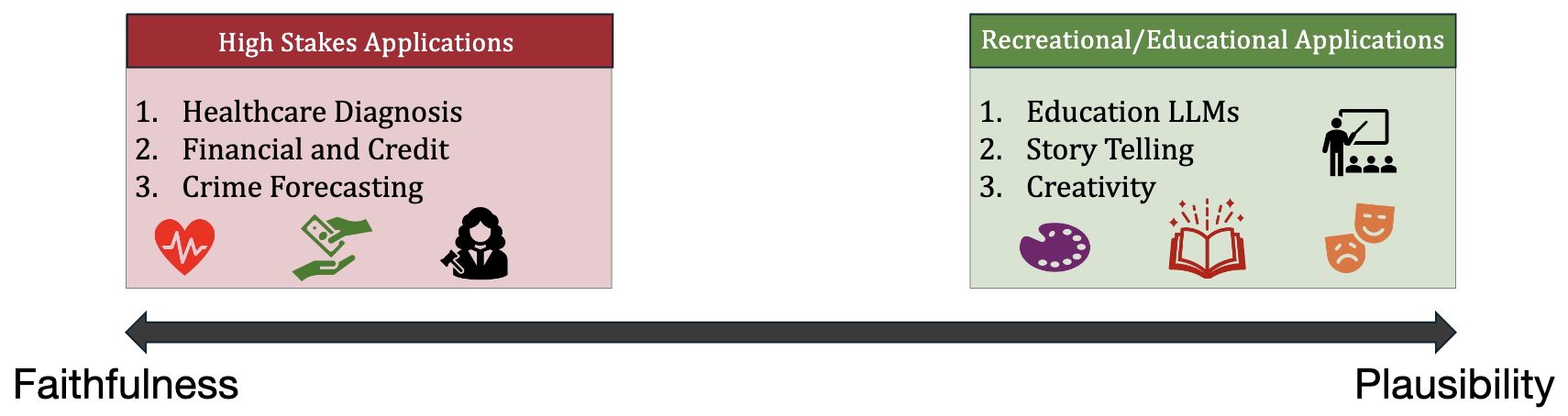

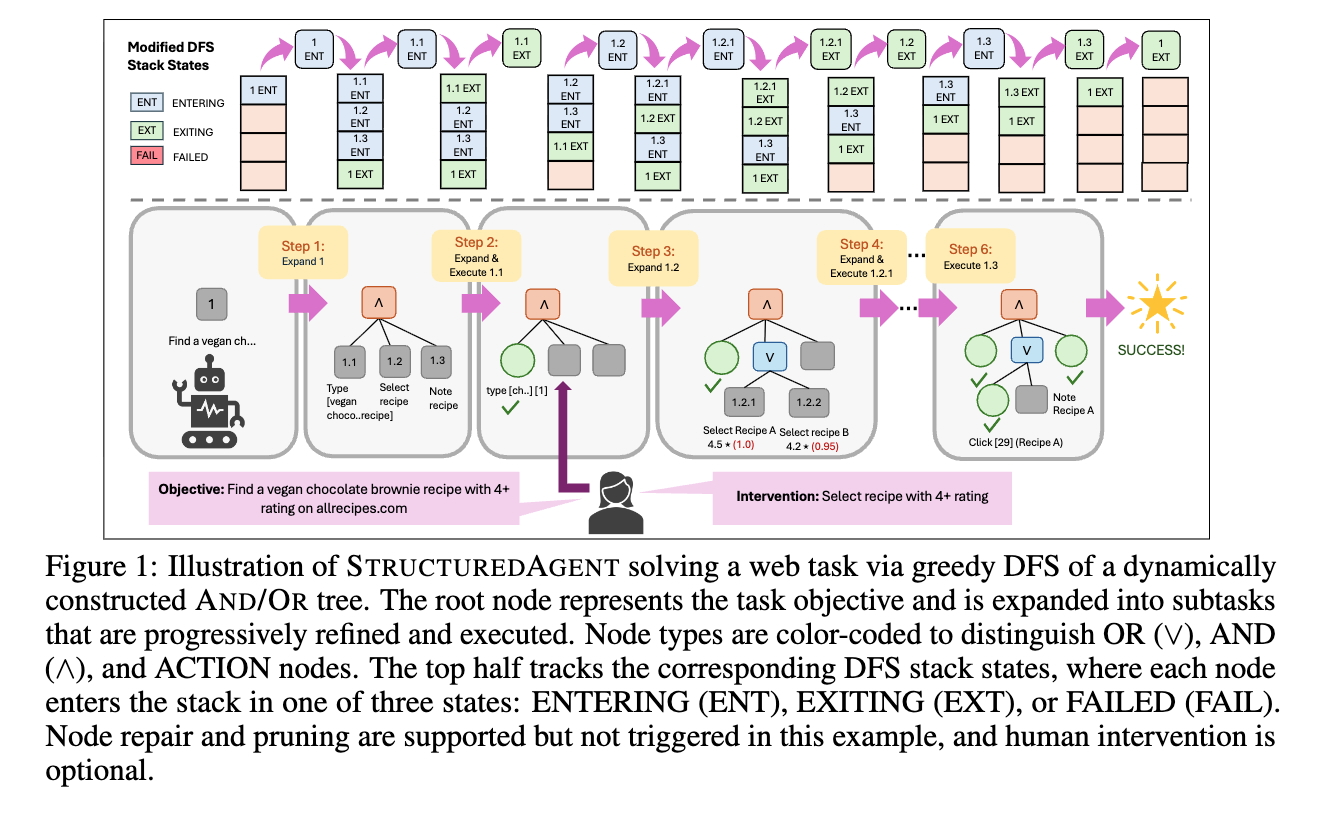

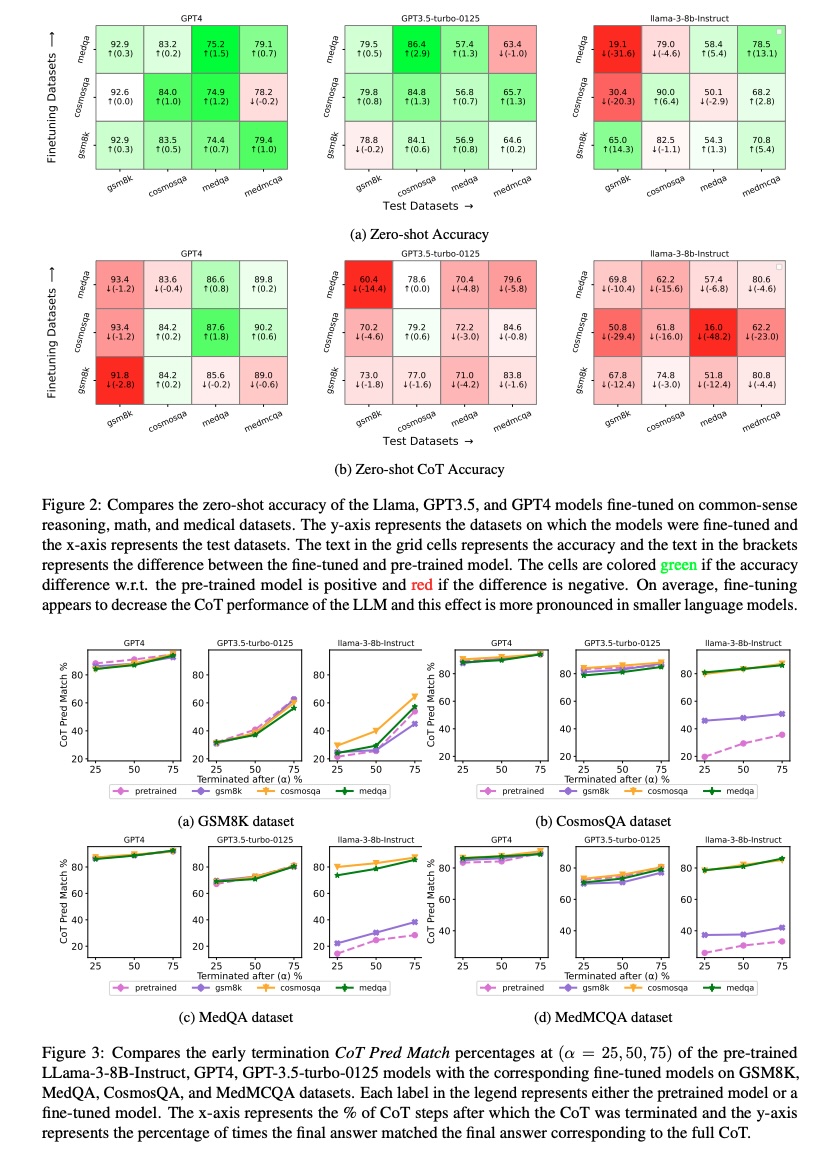

Our work investigates why LLM-generated explanations often appear plausible to humans but may fail to accurately reflect the model's decision-making process. By analyzing the trade-off between faithfulness (how well an explanation captures the model's reasoning) and plausibility (how convincing it seems to a human), we propose strategies to improve explanation quality, such as refining prompting techniques and developing evaluation metrics. We also tackle the challenge of eliciting faithful Chain-of-Thought (CoT) reasoning in LLMs. Our studies, which have been cited in the OpenAI o1 System Card, reveal the limitations of current approaches such as in-context learning, LoRA fine-tuning, and activation editing in guaranteeing accurate reasoning paths. We therefore advocate for new architectural designs and training paradigms that enhance transparency in LLMs, paving the way for more reliable decision-making in domains such as legal and medical AI.

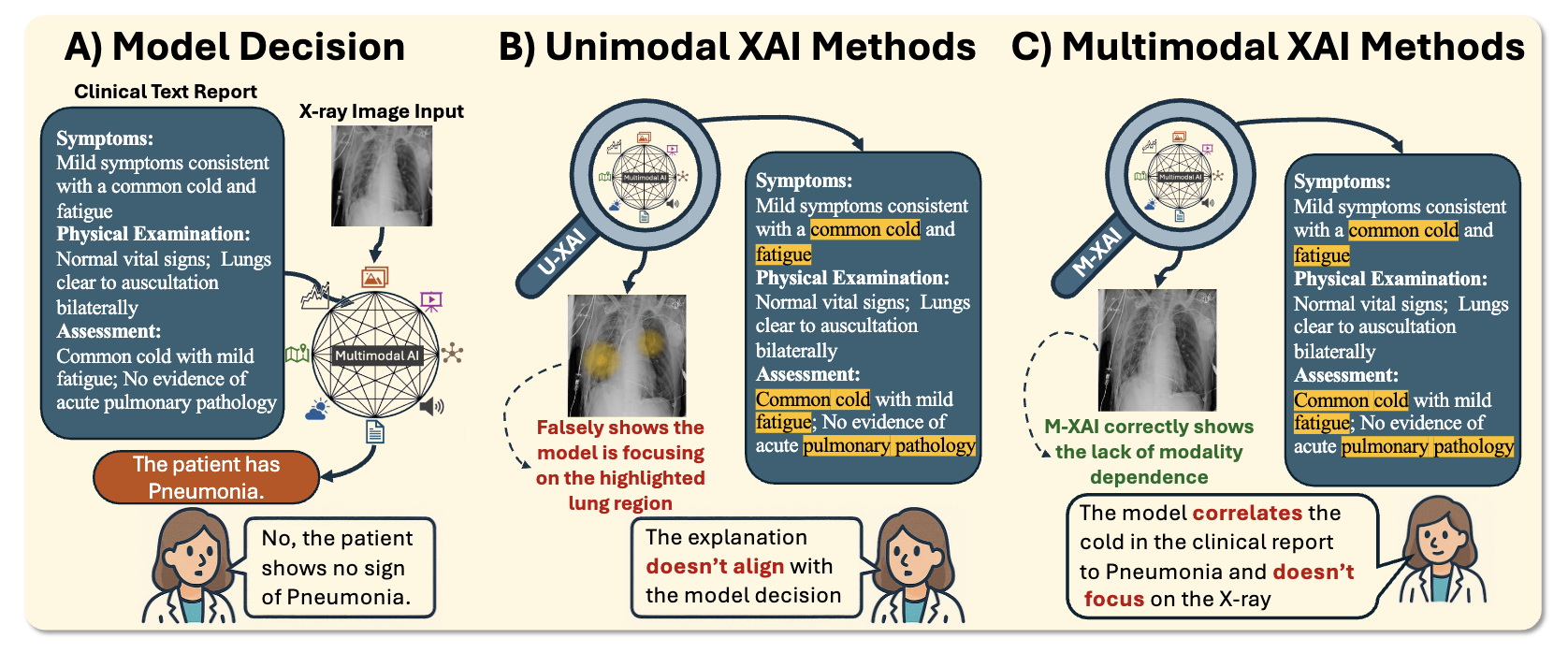

We develop new explainability algorithms to study the behavior of complex black-box unimodal and multimodal models. Research on multilingual and multimodal explanation methods is still at a nascent stage, and we aim to introduce novel algorithms that generate actionable explanations for multimodal models. Through these efforts, our lab aims to shape the future of XAI so that AI systems are both powerful and interpretable, with far-reaching implications for ethical AI deployment.